Last month, the DeepSpeed Team announced ZeRO-Infinity, a step forward in training models with tens of trillions of parameters. In addition to creating optimizations for scale, our team strives to introduce features that also improve speed, cost, and usability. As the DeepSpeed optimization library evolves, we are listening to the growing DeepSpeed community to learn […]

DeepSpeed - Microsoft Research

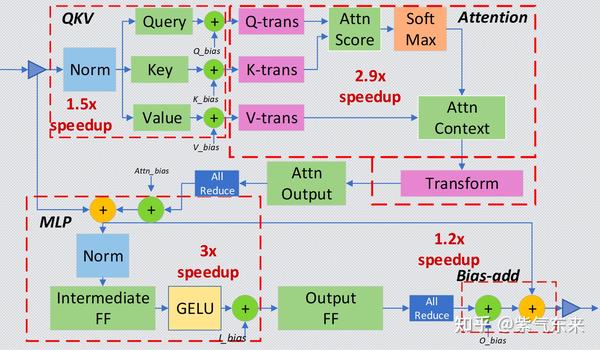

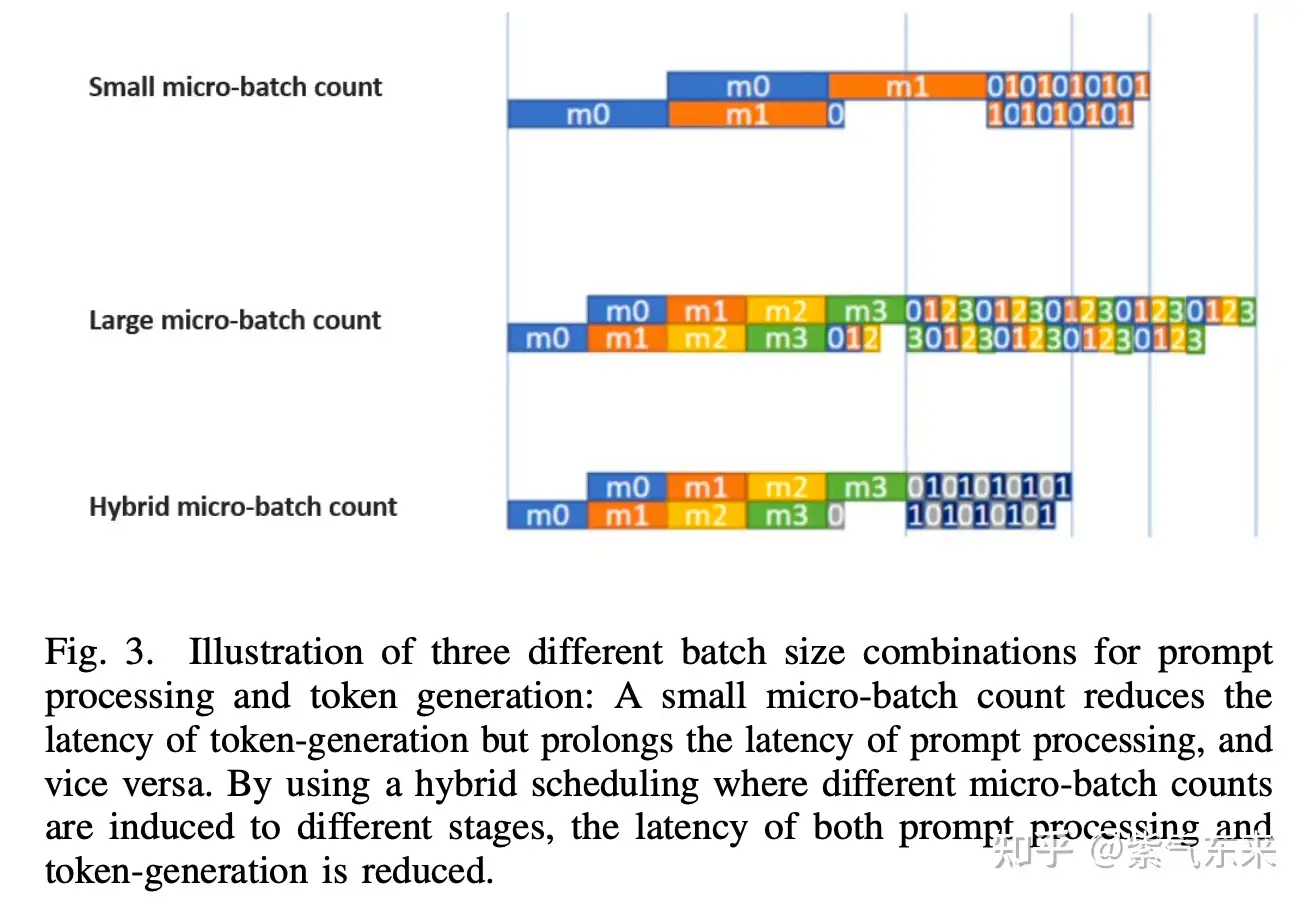

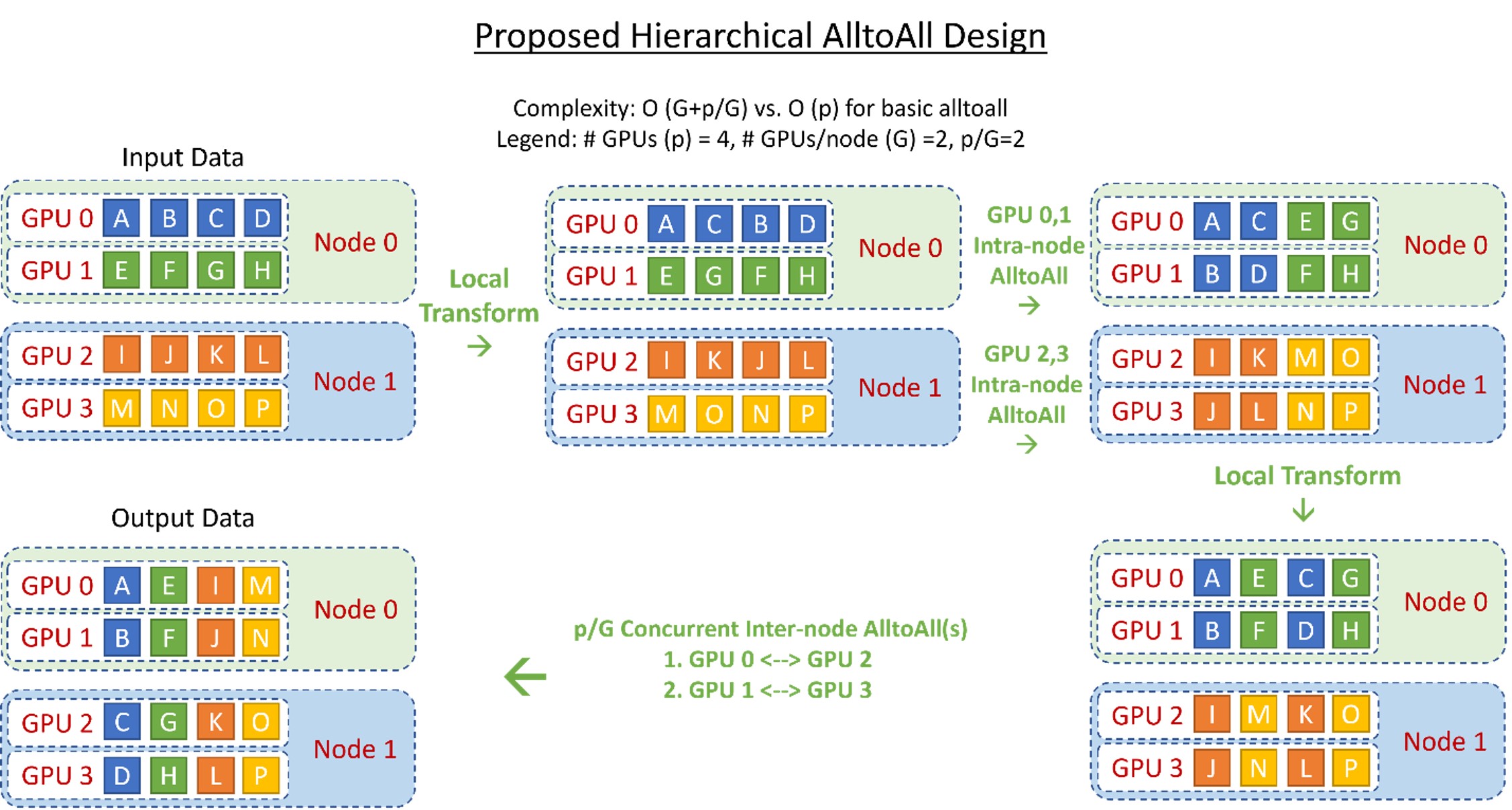

LLM (12): Exploring DeepSpeed Inference's Optimizations for LLM Inference - Zhihu

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

DeepSpeed: Advancing MoE inference and training to power next-generation AI scale - Microsoft Research

GitHub - microsoft/DeepSpeed: DeepSpeed is a deep learning optimization library that makes distributed training and inference easy, efficient, and effective.

DeepSpeed Inference - Enabling Efficient Inference of Transformer Models at Unprecedented Scale, PDF, Graphics Processing Unit

Microsoft's DeepSpeed enables PyTorch to Train Models with 100-Billion-Parameter at mind-blowing speed, by Arun C Thomas, The Ultimate Engineer

ZeRO & DeepSpeed: New system optimizations enable training models with over 100 billion parameters - Microsoft Research

DeepSpeed: Extreme-scale model training for everyone - Microsoft Research