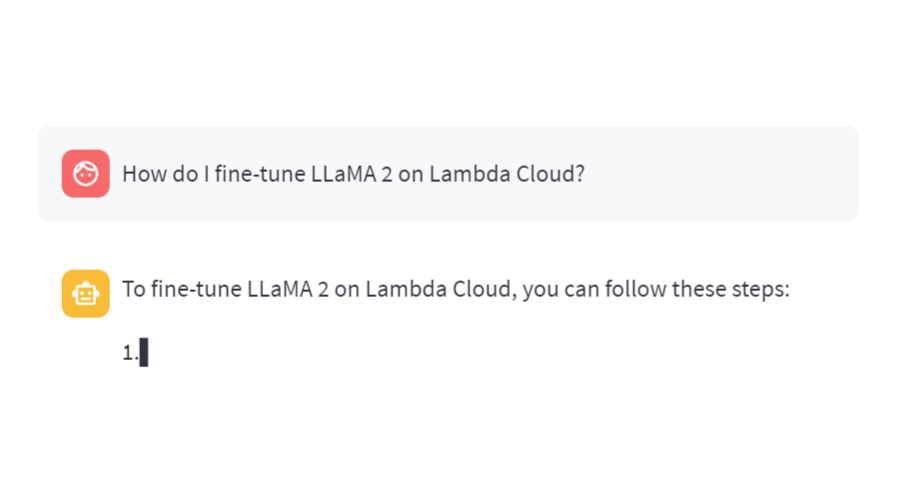

This blog post explains how to fine-tune LLaMA 2 models on Lambda Cloud using an A10 GPU that costs $0.60/hr.

AI Bots as difficult customers—generating synthetic customer conversations using Llama-2, Kafka and LangChain

The Lambda Deep Learning Blog

Mitesh Agrawal on LinkedIn: Careers at Lambda

Fine-tune Llama 2 with Limited Resources

Mitesh Agrawal on LinkedIn: Weights & Biases and Lambda Announce Strategic Partnership to Accelerate…

Fine-tune Llama-2 with SageMaker JumpStart, by Michael Ludvig

Mitesh Agrawal on LinkedIn: Fine tuning Meta's LLaMA 2 on Lambda GPU Cloud

Optimizing Llama-2 Fine-Tuning in Google Colab for Efficient GPU Usage

Best Open Source LLMs of 2024 — Klu

Mike Mattacola on LinkedIn: Train a Foundation Model on Lambda's Cloud

Zongheng Yang on LinkedIn: Serving LLM 24x Faster On the Cloud with vLLM and SkyPilot

How to Install Llama 2 on Your Server with Pre-configured AWS Package in a Single